SUN AUGUST 14TH, 2022 2:00 PM MONTREAL, CANADA

Hey Guys,

You are reading Data Science Learning Center Premium.

If you are studying to become a software developer, engineer or data scientist, you may also want your work to have a positive, long-lasting impact upon the world. This may or may not be relevant to you; that is not a value judgement.

You might hope that the products you are building or involved with have a helpful and empowering impact upon society and people.

I am pretty curious about A.I. for Good, and I recently created a discussion thread around it here:

https://aisupremacy.substack.com/p/discussion-thread-what-are-the-best/comments

Is A.I. for Good real?

As with the CSR movement or the ESG movement, we have to differentiate how much of this is hype and how much of it is real.

If we were to believe McKinsey, Microsoft or the World Economic Forum’s agenda, we’d basically think A.I. for Good was all around us. But with so much PR, lobbying and influence on media, companies that derive a significant part of their revenue from A.I. might also hype up A.I. for Good for their own gain and their own potentially elitist agenda.

As Hyundai sets up its AI center in the Boston area, it makes me wonder. I have noticed that many non-profit labs in A.I. are mostly influenced by bigger corporate entities and turn out to be something quite different.

Microsoft defines its A.I. for Good as the following:

Providing technology and resources to empower organizations working to solve global challenges to the environment, humanitarian issues, accessibility, health, and cultural heritage.

The WEF assures us that: The digital, data and artificial intelligence (AI) dividends of the fourth industrial revolution will create better healthcare outcomes.

Microsoft’s AI for Good video.

The World Economic Forum believes A.I. is helping us to better understand our past, and is also helping us plan for our future with climate change.

While the world lacks A.I. ethics and regulation, we have some vague notions that A.I. can be used for good, and not just for the benefit of shareholders. This idea is helpful public relations for companies that are centralizing A.I. talent and products for their own business models.

A.I. ‘Hype’ Distracts from the Nefarious Uses of A.I.

The World Economic Forum is a place where the economic elite of the world debate the business world, and they have their own ideological, political and commercial motivations for doing so.

The case can be made for A.I.’s impact on society for good or for ill, however you want to frame it. I do not know what the positive benefit of Advertising has been on society, but I do know the Cloud, E-commerce and data analytics are extremely helpful for global productivity and GDP around the world.

There are clearly aspects of algorithms and even dumb A.I. that have been extremely harmful, yet the main entities involved have barely been fined, have likely not been taxed properly, and none of their executives or employees have been held liable for damages. It’s this discrepancy between reality and hype that’s most troubling. There is hardly any rule of law around the impact of A.I. at scale, or for the firms that abuse it and weaponize algorithms to the extremes that the Ad-model best exemplifies.

A.I., Big Data, data analytics, machine learning and software development are not neutral subjects. They actually empower corporate motivations and commercial strategies that can harm or empower people, communities, institutions and society as a whole in the real world.

A.I. for Good

With AI adoption on the rise, the technology is addressing a number of global challenges. But is it really helping to solve humanity’s biggest problems or simply creating new ones?

When the World Economic Forum or Microsoft focus only on the positives of A.I., they are telling a skewed story about the history of A.I. and its effect on capitalism, social equality, democracy, human rights, and the health of free-market competition and equality in capitalism.

Unfortunately, America is not always the ‘good guy’, just as China is not always the ‘bad guy’. American propaganda is everywhere, embedded in the architecture through which BigTech shapes our understanding of media via its platforms.

The political rants on Twitter are for the most part American-centric. The coverage of the News on LinkedIn is, again, skewed to the benefit of Silicon Valley and Microsoft’s partners. I’m based in Canada, and we aren’t necessarily always taking a pro-American or pro-Silicon Valley position.

A software engineer or a data scientist will have limited impact on a company’s real behavior in the business world regarding the monetization of A.I., software, machine learning and their core business models in the Cloud, digital Advertising and related products. We are cogs in the machine. Our job is not to question the stakeholders at Google, Amazon, Microsoft or Apple. If you do so in A.I. ethics at Google, it gets you fired. If you question warehouse conditions at Amazon, you might face legal repercussions. If you question Microsoft’s defense contracts, good luck to you.

Artificial intelligence is powering impressive advances in many industries, so ensuring that AI systems are deployed responsibly is an urgent challenge. But is Silicon Valley, among others, really taking this seriously?

Are Governments or their regulatory bodies? Antitrust regulation has been almost completely absent from American Capitalism since the advent of the internet. Without checks and balances, what really becomes of this fabled powerful and ubiquitous A.I.? We are in a social experiment in the 21st century, and our children may eventually discover what it leads to.

Surveillance Capitalism vs. A.I. for Good

It’s very easy to ignore the weaponization of A.I. and hype A.I. for good.

I will tell a personal story here about my experience on Substack.

I get more subscribers and paid supporters when I hype A.I. for good, but that’s not accountability journalism; it’s just perpetuating the mainstream news narrative.

The more critical I am of BigTech, the more paid subscribers decide to cancel their support. Even on Substack, this is what is happening!

Since I am dependent on this income, this has made me stop and think about the culture of media we are perpetuating.

I don’t really believe there are many powerful examples of A.I. for good. The convenience of Amazon or the utility of Google is not compelling. The destruction of their competition is not something that has been good for society.

Microsoft’s compelling software services monopoly and operating system domination haven’t elevated us. Apple’s high prices and iPhone movement haven’t created a better world.

AI is not a silver bullet, says McKinsey, but it could help tackle some of the world’s most challenging social problems. But how motivated are our most powerful commercial firms to make the world a better place, really? Nobody asks the question.

All major BigTech platforms give in to the lure of advertising and Cloud, since they are simply so profitable, with margins that you cannot find elsewhere and TAMs that are so significant on a global level. Just as Apple thinks more about advertising in the 2020s, so ByteDance will offer an enterprise Cloud computing service competing directly with Alibaba, Baidu, Huawei and others.

What is AI for Good?

Is A.I. for good about having a responsible A.I. program and A.I. ethics guidelines, or is it something else?

Microsoft seems to think A.I. for Good is a set of fringe projects where they give back to the community:

AI for Earth

AI for Health

AI for Accessibility

AI for Humanitarian Action

AI for Cultural Heritage

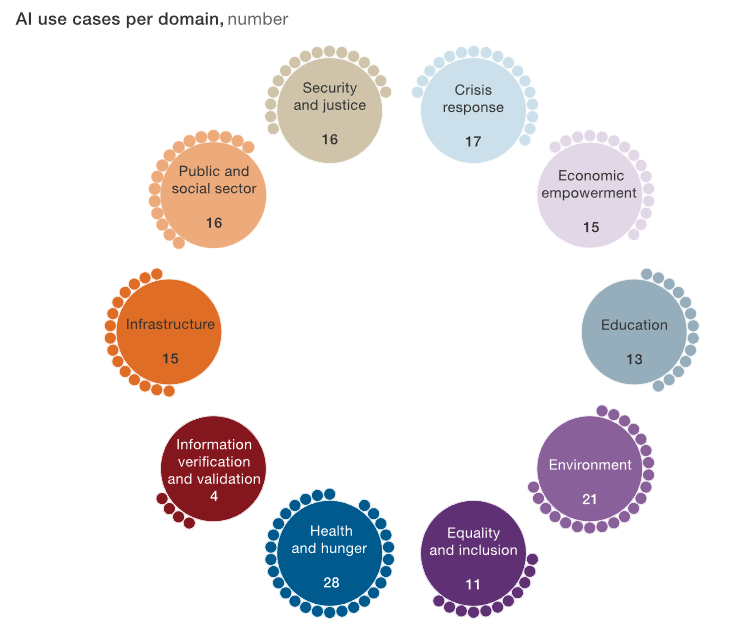

McKinsey thinks it’s an even broader realm of “global challenge” solutions:

Public and social sector

Infrastructure

Information verification and validation

Health and hunger

Equality and inclusion

Environment

Education

Economic empowerment

Crisis response

Security and Justice, among many others.

As it stands in 2022, I cannot help feeling that Governments and BigTech care more about national defense, population surveillance and the use of algorithms for their own interests than about A.I. for good projects that actually empower people.

I hope I am wrong about this.