Who Banned ChatGPT lately?

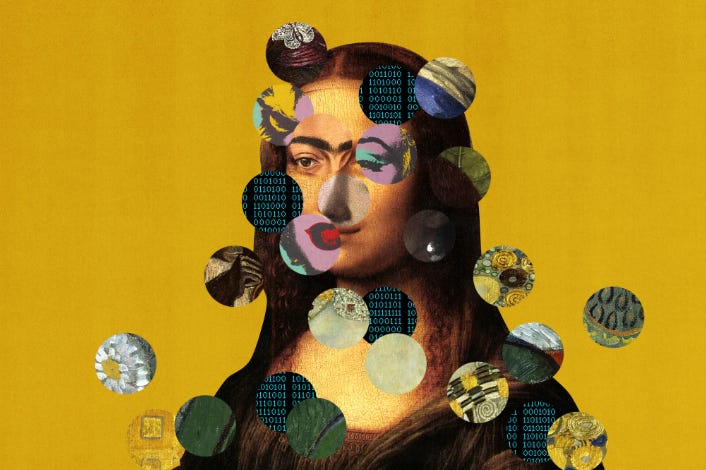

A.I. risk at the intersection of a wildly hyped demo.

Hey Everyone,

So ChatGPT impressed some individuals who wanted productivity hacks; I get that. But when many organizations and online communities are banning its use, we have a full-blown A.I. alignment crisis.

That is, of course, not a story the mainstream media wants to cover, or is good at covering.

As someone who believes in A.I. ethics, I don’t think releasing something into the wild before it’s ready is a great idea. Generative A.I. basically means a lot more spam and unreliable content. We’ve already been struggling with content moderation at scale, with A.I. systems that can’t catch all the bad actors.

Famously, many school boards have had to rewrite their rules on students using ChatGPT. Can a supposedly forthcoming ChatGPT watermark really save them?

New York City public schools ban access to AI tool that could help students cheat

What you may not realize is that banning ChatGPT is becoming a bit of a trend in early 2023.

Top AI conference bans use of ChatGPT and AI language tools to write academic papers

One of the world’s most prestigious machine learning conferences has banned authors from using AI tools like ChatGPT to write scientific papers, triggering a debate about the role of AI-generated text in academia.

The International Conference on Machine Learning (ICML) announced the policy earlier this week, stating, “Papers that include text generated from a large-scale language model (LLM) such as ChatGPT are prohibited unless the produced text is presented as a part of the paper’s experimental analysis.”

Meanwhile, real artists are also being banned for having a style that resembles text-to-image art. The Art subreddit on Reddit permanently banned one artist solely because their style looked similar to AI art, or at least because one moderator thought it did.

Remember that back in December, Stack Overflow had to ban ChatGPT because of the pollution and inaccuracies in the answers it was producing. While that story was swept under the rug in 2022, in 2023 we are seeing many more organizations, communities, online forums and institutions having to ban ChatGPT. Not because it’s amazing, but because the tool actually pollutes, infringes on, or devalues those communities and their goals.

So let’s not all turn bullish at once on the emergence of GPT-4 and better RLHF conversational A.I.; we are a long way from massive scale. There are good reasons Google didn’t release LaMDA to the public, even when one of its employees claimed, for fame or whatever, that it was practically sentient. We are nowhere near AGI, and it’s important to project fact-verified realities, not just the hype spin.

Why do you suppose ChatGPT needs to be banned in 2023? It’s just a limited demo stuck with 2021 data, still learning to optimize better with human feedback. It’s not the product we’ll experience later this year on Bing. Most of the A.I. writers I see seem programmed to push the hype via clickbait, but I’m not sure how much I want to jump on that bandwagon. As an indie journalist, I’m more interested in fair, balanced coverage and accountability journalism.

Conversational A.I. like ChatGPT is hard; it’s not simply a transformative product that is good for the internet and everyone who uses it. Sam Altman has the heart of a venture capitalist: he’s not interested in his product being perfect before it’s released, he’s interested in how much his company is valued at.

It's only January 7th, and ChatGPT is already getting banned a lot in 2023. What does that mean for Microsoft Bing and GPT-4: deliverance, or mistrust of the hype? I'm reading some interesting points of view on Reddit. With A.I., we have to take a long-term view and not give in to the temptation to join the choir. You can be optimistic and realistic at the same time; the two aren’t mutually exclusive.

On LinkedIn, people are trying to rationalize why OpenAI might indeed be worth that much. It’s fun to throw your hat in with the big boys’ club, I get it. But the truth also matters. One writer claimed that GPT-4 was far larger than more rational estimates indicated, which set off an entire wave of misinformation around GPT-4. I had a front-row seat to all of this, and it was a bit shocking to me.

People assume innovation has some kind of de facto right to disrupt and pollute our communities, or that we should accept the “spirit of innovation” (pushed mainly by Big Tech) as some kind of progress in the scientific paradigm, but that’s not all that’s actually going on.

Many A.I. researchers are genuinely interested in democratizing A.I. through projects like Bloom and others. OpenAI’s DALL-E 2 wasn’t as open as Midjourney or Stability AI. It appears ChatGPT will become a Microsoft Bing product, supposedly enabling Microsoft to compete with Google. Google, for its part, may now have to launch its own demo of LaMDA (likely before it’s even ready for public consumption). Google executives were seriously worried about the reputational risk; this is a red flag for A.I. safety.


Subscribe to Machine Economy Press to keep reading this post and get 7 days of free access to the full post archives.